Academic researchers in Northern California are using machine learning and other cutting-edge technologies to help diagnose and treat hearing impairment. Their work can generate anywhere from 50GB to 100GB of data on a given day, which was putting considerable strain on their storage resources.

“Our storage situation was less than ideal,” said Britt Yazel, a researcher and Ph.D. candidate at the Center for Mind and Brain at UC Davis. “We had a terabyte of internal storage in our desktop computers, and we were transferring data back and forth on flash drives all day. It was becoming a nightmare just keeping all of our data organized and accessible.”

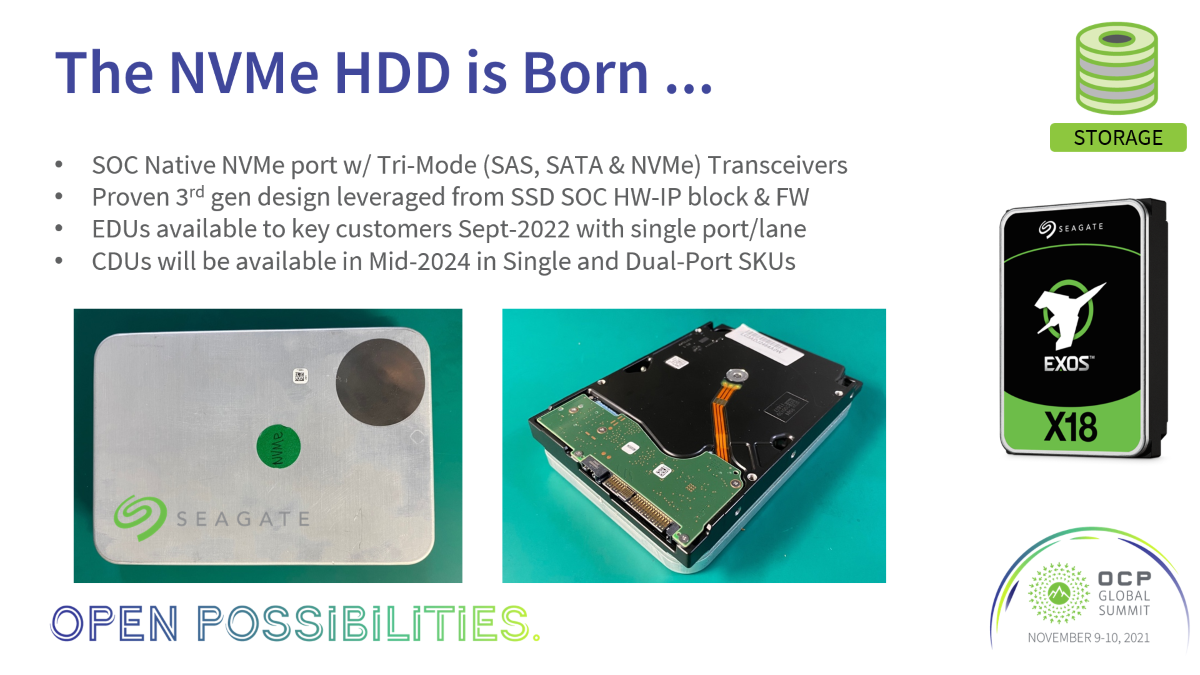

The lab had inherited a server from another university department. Equipped with Seagate hard drives, the server could, the researchers hoped, provide a more efficient data storage solution.

Critical first step: migrating data from PCs to servers to increase performance

“We now have 20TB of storage running in our server, which is incredible,” Yazel said. “We’ve migrated all of our data from our PCs over to the server and we’re seeing great performance. Our write speeds are now at 140MB per second, which is about triple what we had before.”
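To put those figures in perspective, the short sketch below estimates how long a day's worth of data would take to write at the quoted speed. It is only back-of-the-envelope arithmetic: the 50GB to 100GB daily volume and the 140MB-per-second write speed come from the article, while the earlier speed is inferred from "about triple" and the function name `transfer_minutes` and the decimal unit conversion are assumptions made for illustration.

```python
def transfer_minutes(gigabytes: float, mb_per_second: float) -> float:
    """Minutes needed to write `gigabytes` at a sustained `mb_per_second`."""
    megabytes = gigabytes * 1000  # assumption: decimal units, 1 GB = 1000 MB
    return megabytes / mb_per_second / 60


NEW_SPEED_MB_S = 140.0               # write speed quoted for the server
OLD_SPEED_MB_S = NEW_SPEED_MB_S / 3  # assumption: "about triple" read as exactly 3x

for daily_gb in (50, 100):           # daily data volume range from the article
    before = transfer_minutes(daily_gb, OLD_SPEED_MB_S)
    after = transfer_minutes(daily_gb, NEW_SPEED_MB_S)
    print(f"{daily_gb} GB/day: about {before:.0f} min before, {after:.0f} min now")
```

Under these assumptions, even the heaviest day of recordings takes roughly 12 minutes of sustained writing on the server, compared with more than half an hour before.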

Fast system performance and ample storage capacity are critical to helping researchers at the Center for Mind and Brain manage their data more effectively and streamline their workflow.

“We’re creating a ton of data in our research, using machine-learning algorithms to help us better understand hearing loss and improve upon the existing technology that’s out there to treat it,” said Yazel. “All of that data is hugely important to our work, but it doesn’t mean anything unless we can quickly access it, analyze it and share it with our entire team.”

How to pinpoint hearing deficits by analyzing brain activity data

The UC Davis team works to locate the areas of the brain where auditory deficits lie in order to treat hearing loss most effectively. To do that, the researchers record brain activity using high-frequency/high-density EEG (electroencephalography) equipment. A single recording session can take two to three hours; the researchers record up to 128 electrode channels at a sampling rate of 16,000 Hz, far higher than in traditional uses of the technology. Each hour of recording can generate as much as 10GB of data.

The lab’s computers run independent component analysis, allowing researchers to break a “spaghetti mess” of signals into many extracted components, each of which is a single source of electrical signal. “After splitting them apart, we can look at them to try to determine where hearing loss is happening,” Yazel said.
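The idea behind independent component analysis can be illustrated with a toy example. The sketch below is not the lab's pipeline: it handles only two channels rather than 128, uses synthetic signals, and swaps a production ICA algorithm for a simple search over rotation angles that maximizes non-Gaussianity (excess kurtosis) after whitening. All names, such as `ica_2ch`, are hypothetical.

```python
import math
import random


def center(x):
    """Subtract the mean so the signal averages to zero."""
    m = sum(x) / len(x)
    return [v - m for v in x]


def variance(x):
    """Variance of an already-centered signal."""
    return sum(v * v for v in x) / len(x)


def rotate(x1, x2, angle):
    """Rotate the pair of channels by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return ([c * u + s * v for u, v in zip(x1, x2)],
            [-s * u + c * v for u, v in zip(x1, x2)])


def whiten(x1, x2):
    """Decorrelate two channels and scale each to unit variance."""
    x1, x2 = center(x1), center(x2)
    cov = sum(u * v for u, v in zip(x1, x2)) / len(x1)
    # Angle that diagonalizes the 2x2 covariance matrix.
    theta = 0.5 * math.atan2(2 * cov, variance(x1) - variance(x2))
    p1, p2 = rotate(x1, x2, theta)
    s1, s2 = math.sqrt(variance(p1)), math.sqrt(variance(p2))
    return [v / s1 for v in p1], [v / s2 for v in p2]


def excess_kurtosis(y):
    """Excess kurtosis of a zero-mean, unit-variance signal."""
    return sum(v ** 4 for v in y) / len(y) - 3.0


def ica_2ch(x1, x2, steps=180):
    """Two-channel ICA: whiten, then pick the most non-Gaussian rotation."""
    z1, z2 = whiten(x1, x2)
    angles = (i * (math.pi / 2) / steps for i in range(steps))
    best = max(angles, key=lambda a: sum(abs(excess_kurtosis(y))
                                         for y in rotate(z1, z2, a)))
    return rotate(z1, z2, best)


def abs_corr(a, b):
    """Absolute correlation, used to compare components with true sources."""
    a, b = center(a), center(b)
    return abs(sum(u * v for u, v in zip(a, b))) / math.sqrt(
        sum(u * u for u in a) * sum(v * v for v in b))


# Synthetic demo: two independent sources mixed into two "electrode" channels.
rng = random.Random(0)
n = 4000
src_a = [math.sin(2 * math.pi * 5 * i / n) for i in range(n)]  # oscillation
src_b = [rng.uniform(-1, 1) for _ in range(n)]                 # noise-like source
mix_1 = [0.8 * a + 0.6 * b for a, b in zip(src_a, src_b)]
mix_2 = [0.3 * a - 0.9 * b for a, b in zip(src_a, src_b)]

comp_1, comp_2 = ica_2ch(mix_1, mix_2)
for name, src in (("src_a", src_a), ("src_b", src_b)):
    print(name, round(abs_corr(src, comp_1), 3), round(abs_corr(src, comp_2), 3))
```

Each recovered component should correlate strongly with exactly one of the original sources. As with any ICA, the order and sign of the components are arbitrary, which is why the comparison uses absolute correlation.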

Beyond diagnosis, the lab is also interested in new ways to combat hearing loss.

New potential to improve audio prosthesis technology

“People wearing hearing aids have a hard time mentally blocking out all that noise to focus on a specific voice,” Yazel said. “We call this the ‘cocktail party’ problem. We feel there’s much room for improvement with auditory prostheses.”

To address these problems, the lab is using machine learning along with specialized software and hardware from Intel, Nvidia and Seagate to improve on traditional hearing devices. The research team hopes that machine learning can help create a new class of assistive-hearing device that can “intelligently separate signal from noise,” Yazel explained.

Managing data will continue to play a huge part in the UC Davis team’s research efforts.

“Data is becoming more and more important to our work,” said Dr. Lee Miller, associate professor at the Center for Mind and Brain. “Data helps our team find new ways to diagnose and treat hearing loss. It’s the foundation for all of our innovation, and that’s why it’s so important for us to have fast, reliable and high-capacity storage solutions.”