Facebook’s Bryce Canyon Meets New Storage Capacity and Density Challenges

Earlier this month, Jason Adrian of Facebook Engineering shared the design for Bryce Canyon, the company’s next-generation storage platform. It’s an exciting advancement and I encourage you to check it out. Their team has contributed it to the Open Compute Project (OCP) so everyone can benefit. (OCP is a consortium that aims to design open, efficient computing infrastructures, and includes companies like Seagate, Intel, Apple, Dell, and Microsoft, along with leaders in industries that depend on advanced datacenter technologies, such as Fidelity, Verizon, AT&T, and Bank of America.) Adrian’s post also does a nice job of tracing the evolution from Knox in 2013 to today. You can see both how the technology evolved and how Facebook’s storage needs changed as it grew.

What’s Changing?

Facebook, like many companies, faces a data deluge; theirs is just more extreme. The company’s new storage design reflects how people are using the platform today. For Facebook that means billions of photos and videos posted, shared, and sent. And the way we use photos and video is constantly evolving. Consider that the millions of drones filming 4K video today were unknown a few short years ago. Virtual and augmented reality are both data-intensive technologies as well. According to web sources, Facebook already stores multiple exabytes of data. That’s millions of terabytes, and growing exponentially. The absolute size is remarkable. Cloud providers like Facebook are experiencing 3x growth every year, and the rate of growth is only accelerating as new applications and demands emerge. Bryce Canyon is meant to meet that challenge.

It’s All About High-Density Storage

What would you do if the data you need to store is growing 50 percent every year, but your data center budget is only increasing by single digits? You definitely don’t want the real estate dedicated to that data center to grow at anywhere near that 50 percent annual rate. The better route for TCO (total cost of ownership) is to increase storage density, and in the case of Bryce Canyon, you get a 20 percent HDD density boost.
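To see how quickly that gap opens up, here is a minimal sketch of the compounding involved. The 50 percent data growth figure comes from the paragraph above; the 5 percent budget growth and the five-year horizon are assumptions chosen only to illustrate "single digits," not figures from Facebook.

```python
def compound(start: float, annual_rate: float, years: int) -> float:
    """Return the value of `start` after compounding `annual_rate` for `years`."""
    return start * (1 + annual_rate) ** years

YEARS = 5                   # assumed planning horizon
DATA_GROWTH_RATE = 0.50     # 50 percent per year, from the text
BUDGET_GROWTH_RATE = 0.05   # assumed stand-in for "single digits"

# Normalize today's data volume and today's budget to 1.0.
data_multiple = compound(1.0, DATA_GROWTH_RATE, YEARS)
budget_multiple = compound(1.0, BUDGET_GROWTH_RATE, YEARS)

# How much more data each budget dollar has to cover after YEARS.
gap = data_multiple / budget_multiple

print(f"Data after {YEARS} years:   {data_multiple:.2f}x")
print(f"Budget after {YEARS} years: {budget_multiple:.2f}x")
print(f"Data per budget dollar:  {gap:.2f}x")
```

Under these assumptions, the data grows to more than seven times its starting size while the budget grows by less than a third, which is why density and cost per terabyte matter so much.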

Traditionally, storage enclosures came in standard sizes that could fit 14 to 16 HDDs. Today’s high-density storage systems, such as Bryce Canyon, can hold 72 HDDs in the same space. That’s the difference between a rack that holds fewer than 200 drives and one that holds 700 to 800 drives. Facebook’s design can be configured as a single 72-drive storage server, as dual 36-drive storage servers with fully independent power paths, or as a 36/72-drive JBOD (just a bunch of disks). This modular approach simplifies data center operations by reducing the number of storage platform configurations needed.

This industry improvement in density, coupled with the improvements Seagate has pioneered in the areal density of the HDD itself, means a rack full of Bryce Canyons with Seagate’s 12TB Enterprise Capacity (Helium) hard drives could store ~10PB of data.
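The arithmetic behind that ~10PB figure can be sketched in a few lines. The drive counts (fewer than 200 versus 700 to 800 per rack) and the 12TB drive size come from the text above; the use of decimal units (1 PB = 1,000 TB) is an assumption that matches how drive capacities are normally quoted, and the traditional rack is shown with the same 12TB drives purely for comparison.

```python
TB_PER_PB = 1000  # decimal units, assumed; drive capacities are quoted this way

def rack_capacity_pb(drives_per_rack: int, tb_per_drive: float) -> float:
    """Raw (unformatted, unreplicated) capacity of one rack, in petabytes."""
    return drives_per_rack * tb_per_drive / TB_PER_PB

DRIVE_TB = 12  # 12TB drives, from the text

dense_low = rack_capacity_pb(700, DRIVE_TB)     # low end of a high-density rack
dense_high = rack_capacity_pb(800, DRIVE_TB)    # high end of a high-density rack
traditional = rack_capacity_pb(200, DRIVE_TB)   # upper bound for a traditional rack

print(f"High-density rack: {dense_low:.1f} to {dense_high:.1f} PB raw")
print(f"Traditional rack:  under {traditional:.1f} PB raw")
```

This gives roughly 8.4 to 9.6 PB of raw capacity per high-density rack, in line with the ~10PB figure, against less than 2.4 PB for a rack of traditional enclosures.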

Modular, Flexible, and Scalable

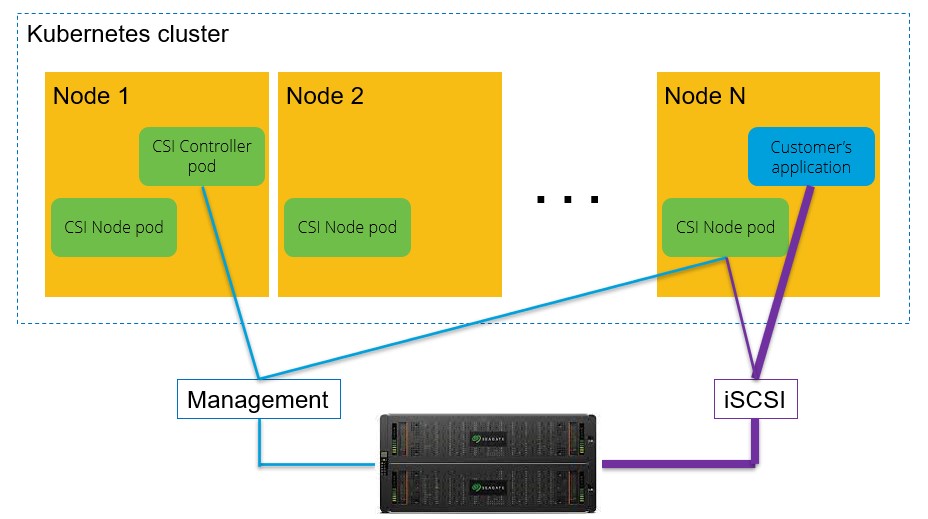

Accounting for future needs is part of the new Bryce Canyon. Specifically, it provides a powerful disaggregated storage capability with easier scalability. It’s an extension of Facebook’s disaggregated rack approach. A traditional server strategy fixes a finite amount of CPU, memory, and storage in each physical server or rack. By disaggregating these resources into distinct, optimized compute or storage systems, the company can better optimize for both cost and flexibility. This range of purpose-built storage systems, with one designed for disk and one designed for flash, shows both the application and evolution of that concept to and within data center storage.

Simply put, Facebook’s design enables the company to scale capacity easily and affordably, even exponentially, with high levels of predictability, performance, and reliability.

Balancing HDD and Flash in Cloud Data Centers

HDDs are the backbone of Facebook’s data storage infrastructure because they’re the only technology in existence that delivers the capacity and scalability required to store such massive amounts of data. Much of Facebook’s stored data is from the billions of photos users upload every year. Storage and retrieval of online photos is a prime example of an HDD use case since the primary needs are deep capacity, easy scalability, and affordability. Hard drives are able to balance these needs while delivering the response times Facebook users expect.

Flash storage has a role in the data center too. When architecting scalable storage systems, it’s good practice to disaggregate your flash and HDD into optimized systems for different uses. For example, store data that is less frequently accessed on lower-cost storage infrastructure and keep frequently accessed data on higher-performance (more costly) storage. For high-value transaction processing and trading, flash delivers the instantaneous performance needed, and complements the HDDs that continue to provide the essential infrastructure for massive capacity into the foreseeable future.
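As a rough illustration of that tiering idea, the sketch below places objects on flash or HDD based on how often they are read. Everything in it is hypothetical: the `StoredObject` type, the `choose_tier` function, the 100-reads-per-day threshold, and the sample objects are made up for illustration and do not describe how Facebook actually places data.

```python
from dataclasses import dataclass

@dataclass
class StoredObject:
    name: str
    reads_per_day: float

# Hypothetical cutoff: objects read at least this often count as "hot".
HOT_READS_PER_DAY = 100.0

def choose_tier(obj: StoredObject, hot_threshold: float = HOT_READS_PER_DAY) -> str:
    """Send frequently read objects to flash and everything else to HDD."""
    return "flash" if obj.reads_per_day >= hot_threshold else "hdd"

# Made-up sample workload.
objects = [
    StoredObject("trade-ledger-index", 50_000),
    StoredObject("profile-photo-2017", 120),
    StoredObject("vacation-album-2013", 0.2),
]

placement = {obj.name: choose_tier(obj) for obj in objects}

for name, tier in placement.items():
    print(f"{name}: {tier}")
```

A real system would weigh more than read frequency, such as object size, latency targets, and cost per terabyte, but the basic split is the same: a small, hot working set on flash and the bulk of the capacity on hard drives.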

The design specification for Bryce Canyon is publicly available through the Open Compute Project, and a comprehensive set of hardware design files will soon follow.