Data in Motion: at Seagate’s Technology Advisory Board meeting, industry leaders grappled with how to tap humanity’s greatest resource—data—from edge to core.

The $4.1 trillion question that enterprises are asking these days is: How can we convert data’s new edge-enabled value into new markets?

That quest was front and center at the second annual Technology Advisory Board meetings, built around the theme of “Data in Motion” and hosted by Seagate this month. At Seagate, we like to say that the edge is near—a lot. What we mean by that is that, both spatially and temporally, data’s gravity is shifting to the outer boundaries of the network.

This movement has consequences. Among them: unprecedented generations of staggering volumes of data—and the rise of data’s previously untapped value, which has already been unlocking new use cases.

This potent flow of data brought together leaders from a range of industries that included autonomous vehicles, telco, content delivery networks, media and entertainment, ride share, cloud, and data security. On a sunny October day in Cupertino, California, they puzzled over critical questions concerning data at the edge.

Questions like:

- What exactly do we mean when we say the edge?

- Which multi- or hybrid cloud configurations are best for which use cases?

- How do we manage change (of equipment, for example) at the edge?

- Does the edge need 5G—or is it 5G that needs the edge?

- How can we most securely store and move data at the edge?

- What does the distributed ecosystem mean for the movement of data?

Finding answers to these conundrums is going to be a must for enterprises that want to make use of the previously inaccessible value of data created and processed at or near the edge. Here are some insights offered by Seagate’s Technology Advisory Board participants:

There are good reasons why more and more use cases are moving computing and storage closer to where data is created.

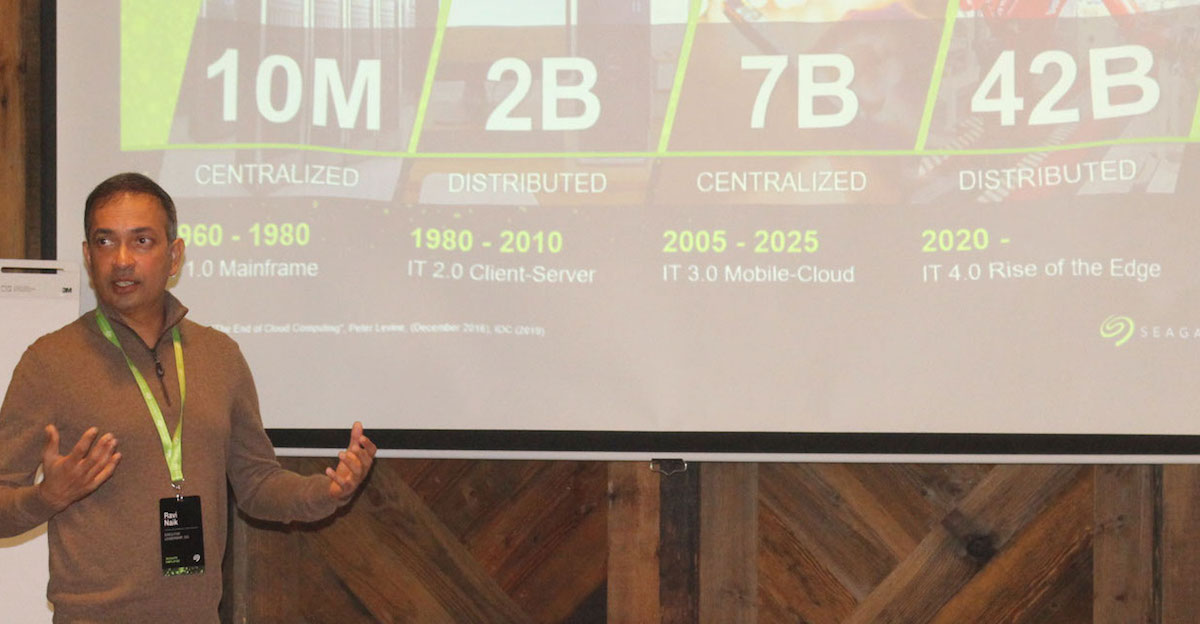

According to Seagate’s CIO Ravi Naik (pictured above), the edge is particularly appealing to telcos, content delivery networks, connected and autonomous vehicles, smart manufacturing, and healthcare. That’s because processing data at the edge can offer gains in areas of cost, latency, and data protection. All of these verticals have growing numbers of use cases that rely on real-time data processing at the edge.

Robin Nijor, VP of Business Development and Marketing at Renovo—the company behind “the first truly open platform for autonomous vehicles”—sees the edge as indispensable to his business. “Without the edge, you can’t process the data that advanced driver-assisted systems (ADAS) and autonomous vehicles (AV) are creating today,” Nijor says. “Without it, you will never be able to process data from next-generation vehicles, which will have 10 times today’s amount of data—at least tens of TBs per vehicle per day.” For Renovo, “the edge is where the intelligence lives to make the data work for us; without it, we could not handle the amount of data produced by fleets of vehicles.”

Robin Nijor, VP of Business Development and Marketing at Renovo—the company behind “the first truly open platform for autonomous vehicles”—sees the edge as indispensable to his business. “Without the edge, you can’t process the data that advanced driver-assisted systems (ADAS) and autonomous vehicles (AV) are creating today,” Nijor says. “Without it, you will never be able to process data from next-generation vehicles, which will have 10 times today’s amount of data—at least tens of TBs per vehicle per day.” For Renovo, “the edge is where the intelligence lives to make the data work for us; without it, we could not handle the amount of data produced by fleets of vehicles.”

The edge is defined by location at the outer boundary of the network—but there’s more to it than that.

While we speak of the edge in singular, it’s a bit of a misnomer for an inherently distributed reality. There isn’t one edge; there are many edges.

Use cases and workloads sometimes define the edge. Renovo counts two types of edges among its use cases, Nijor says. The edge is found both in 1) an ADAS and an AV, and 2) in the garage, where the vehicle offloads customer data. Finally, TAB participant Margaret Chiosi—a telco consultant who is an ex AT&T and Huawei exec—offers another twist on the question, Where is the edge? “Is it located with a service provider or does it reside in the IoT itself?” Ownership, too, will shape and define the edge.

Public cloud continues to be valuable, but the edge means a shift to a multi-cloud and hybrid-cloud environment.

Some data needs real-time activation at the edge (30% of it, per IDC’s estimate), some will be sent to private clouds (which can offer simpler architecture, lower TCO, scalability, and improved security), and some will end up in the public cloud (for example, for long-term archiving of latency-tolerant data and/or for high-volume AI learning). About 57% of enterprises see a hybrid cloud in their future. There is a clear need for the right mix of edge data centers, private clouds, and public cloud. Deciding what goes where depends on use cases, business goals, and enterprises’ constraints.

Moving large amounts of data within current networks is a challenge.

5G can and will help feed the edge, but it might also magnify the dilemma because the bandwidth may not be enough in some data-rich cases (for example in the media and entertainment industry). The best applications will seamlessly obscure and broker the complexities of edge architecture.

Operationalizing the edge is not a walk in the park.

Change management at the edge—swap-outs on account of upgrades, life-cycle issues, failure provisioning—all these operational challenges will need to be addressed by all participants in the ecosystem. The challenge of changing hardware at edge locations, for example, needs solutions.

It’s a lot easier to change out aging equipment at data centers, which are fewer in number, and plan for redundancies, according to Chiosi. “The more workload you put at the edge, the higher the probability of needing hardware changes,” she said. “In many instances at the edge, you can’t afford to let stuff sit idle during the failures since there isn’t a large pool of redundancies. Wireless access makes clustering of edges much easier for redundancy. But high bandwidth may require wireline, which may be a challenge for the edge.”

Data security at the edge (and elsewhere, for that matter) must be a foundational built-in design element.

Whether data is in production, use, storage, or transit, keeping it safe is vital.

On the one hand, obvious physical risk factors exist at edge locations (most of which are unprotected by gates and guards). On the other hand, distributing data may in some cases reduce risk to data, since compromising one node may only mean compromising the data there and then, and not the entire network (unlike at centralized locations). In fact, security concerns might push some workloads to the edge.

As devices enter and exit the network, they must be trusted to participate in the ecosystem. The data itself must be trusted, whether data used in analytics, data transmitted across nodes, or data uploaded to the cloud. Data in motion will be most vulnerable as it traverses networks, so nodes need to be authenticated to communicate securely over disparate networks. Data encryption, keys, protection of and integrity of devices, authenticity, and availability of data are crucial. Chain of trust needs to move up the whole stack from injection point to hardware to software. Hardware root of trust (RoT) is becoming a must-have for the edge. Additionally, industries have various security regulations with which the means of data management at the edge need to be compliant.

All in all, the lively conversations of the Technology Advisory Board attendees brought home a reality animating us at Seagate since we began our work of storing and managing data over 40 years ago: data is a human thing.

It’s humanity’s greatest resource.

To be sure, its flow will shift over years and innovations to come. It will be on us, enterprises that exist thanks to data, to enable humanity to use it optimally—in ways that are safe, smart, and worthwhile.