I was recently speaking to a customer about data reduction technology and I remembered a conversation I had with my mother when I was a teenager. She used to complain how chaotic my bedroom looked, and one time I told her I was illustrating the second law of thermodynamics for my physics class. I was referring to the mess and the tendency of things to evolve towards the state of maximum entropy, or randomness. I have to admit I only used that line once with my mom because it pissed her off and she likened me to an intelligent donkey.

I never expected those early lessons in theoretical physics to be useful in the real world, but as it turns out entropy can be a significant factor in determining solid state drive (SSD) performance. When an SSD employs data reduction technology, the degree of entropy or randomness in the data stream becomes inversely related to endurance and performance — the lower the data entropy, the higher the endurance and performance of the SSD.

Entropy affects data reduction

In this context I am defining entropy as the degree of randomness in data stored by an SSD. Theoretically, minimal or nonexistent entropy would be characterized by data bits of all ones or all zeros, and maximum entropy by a completely random series of ones and zeros. In practice, the entropy of what we often call real-world data falls somewhere in between these two extremes. Today we have hardware engines and software algorithms that can perform deduplication, string substitution and other advanced procedures that can reduce files to a fraction of their original size with no loss of information. The greater the predictability of data — that is, the lower the entropy — the more it can be reduced. In fact, some data can be reduced by 95% or more!
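To make the two extremes concrete, here is a minimal sketch that estimates entropy from byte frequencies (Shannon entropy, in bits per byte). The function name and the sample data are my own illustrations, not anything an SSD controller actually runs. This simple measure also ignores repeated patterns longer than one byte, so it understates how reducible real files can be.

```python
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the byte-frequency Shannon entropy of data in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    # Sum p * log2(1/p) over every byte value that occurs in the data.
    return sum((n / total) * math.log2(total / n) for n in counts.values())


# Illustrative samples (assumptions, not measurements from a real drive).
all_zeros = bytes(4096)            # one repeated value: the minimal-entropy extreme
text = b"the quick brown fox jumps over the lazy dog " * 100
random_bytes = os.urandom(4096)    # close to the maximal-entropy extreme

for label, sample in [("all zeros", all_zeros), ("text", text), ("random", random_bytes)]:
    print(f"{label:>10}: {shannon_entropy(sample):.2f} bits/byte")
```

The all-zeros sample scores 0 bits per byte, the random sample lands close to the 8-bit maximum, and the text sample falls in between, which is where most real-world data sits.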

Files such as documents, presentations and email generally contain repeated data patterns with low randomness, so they are readily reducible. In contrast, video files (which are usually already compressed) and encrypted files (which are inherently random) are poor candidates for data reduction.
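You can see the difference with any general-purpose compressor. The sketch below uses Python's standard zlib module as a stand-in for a data reduction engine (an assumption for illustration; it is not the algorithm any particular SSD uses), and the sample data and the `reduction_percent` helper are hypothetical.

```python
import os
import zlib


def reduction_percent(data: bytes) -> float:
    """Percentage by which zlib shrinks data (negative if the output grows)."""
    compressed = zlib.compress(data, 9)
    return 100.0 * (1 - len(compressed) / len(data))


# Highly repetitive, document-like data (illustrative only).
document_like = b"Quarterly report: revenue, costs, and forecast.\n" * 500
# Random bytes stand in for encrypted or already-compressed files.
random_like = os.urandom(len(document_like))

print(f"document-like: {reduction_percent(document_like):.1f}% smaller")
print(f"random-like:   {reduction_percent(random_like):.1f}% smaller")
```

The repetitive sample shrinks dramatically, while the random sample does not shrink at all and may even grow by a few bytes of compressor overhead.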

A reminder is in order: do not confuse random data with random I/O. Random and sequential I/O describe how data is accessed on the storage media. The mix of random and sequential I/O also influences performance, but in a different way than entropy does, as described in my blog Teasing out the lies in SSD benchmarking.

Why data reduction matters in an SSD

The NAND flash memory inside SSDs is very sensitive to the cumulative amount of data written to it. The more data written to flash, the shorter the SSD's service life and the sooner its performance will degrade. Writing less data, therefore, means better endurance and performance. You can read more about this topic in my two blogs Can data reduction technology substitute for TRIM and Write Amplification – Part 2.
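A rough back-of-the-envelope sketch shows why writing less matters. It assumes a deliberately simplified model in which flash wear depends only on the bytes that reach flash after reduction, and it ignores garbage collection and every other controller effect. The function names and all the numbers are hypothetical.

```python
def flash_writes(host_writes: float, reduction_fraction: float) -> float:
    """Data reaching flash after reduction, in the same unit as host_writes."""
    if not 0.0 <= reduction_fraction < 1.0:
        raise ValueError("reduction_fraction must be in [0, 1)")
    return host_writes * (1.0 - reduction_fraction)


def years_of_service(rated_flash_writes_tb: float,
                     host_writes_gb_per_day: float,
                     reduction_fraction: float) -> float:
    """Years until the rated flash write budget is used up (simplified model)."""
    daily_flash_gb = flash_writes(host_writes_gb_per_day, reduction_fraction)
    return rated_flash_writes_tb * 1000.0 / daily_flash_gb / 365.0


# Hypothetical drive and workload: 100 TB write budget, 50 GB written per day.
for fraction in (0.0, 0.3, 0.5):
    years = years_of_service(100.0, 50.0, fraction)
    print(f"reduction {fraction:.0%}: about {years:.1f} years")
```

In this model, halving the data written to flash doubles the time it takes to exhaust the write budget. Real drives are more complicated, but the direction of the effect is the same.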

Real-world examples in client computing

Take an encrypted text document. The file started out as mostly text with some background formatting data. All things considered, the original text file is fairly simple and organized. The encryption, by design, turns the data into almost completely random gibberish with almost no predictability to the file. The original text file, then, has low entropy and the encrypted file high entropy.
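Here is a small sketch of that effect. Python's standard library has no real cipher, so `toy_encrypt` below is a stand-in I made up: it XORs the text with a keystream derived from SHA-256. It is for illustration only and is not secure encryption, but its output looks random in the same way real ciphertext does. zlib again stands in for a data reduction engine.

```python
import hashlib
import zlib


def toy_encrypt(plaintext: bytes, key: bytes) -> bytes:
    """XOR plaintext with a SHA-256 counter keystream. Illustration only, NOT secure."""
    out = bytearray()
    for offset in range(0, len(plaintext), 32):
        counter = (offset // 32).to_bytes(8, "big")
        keystream = hashlib.sha256(key + counter).digest()  # 32 keystream bytes per block
        chunk = plaintext[offset:offset + 32]
        out.extend(b ^ k for b, k in zip(chunk, keystream))
    return bytes(out)


def compressed_size(data: bytes) -> int:
    """Size in bytes after zlib compression at the highest level."""
    return len(zlib.compress(data, 9))


# Hypothetical document text and key.
plaintext = b"Dear team, please find the meeting notes attached.\n" * 200
ciphertext = toy_encrypt(plaintext, b"hypothetical-key")

print("plaintext :", len(plaintext), "->", compressed_size(plaintext), "bytes")
print("ciphertext:", len(ciphertext), "->", compressed_size(ciphertext), "bytes")
```

Both files are the same length, yet the plaintext compresses to a small fraction of its size while the ciphertext barely changes. Because XOR is its own inverse, calling `toy_encrypt` again with the same key recovers the original text, so no information was lost; it just became unpredictable.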

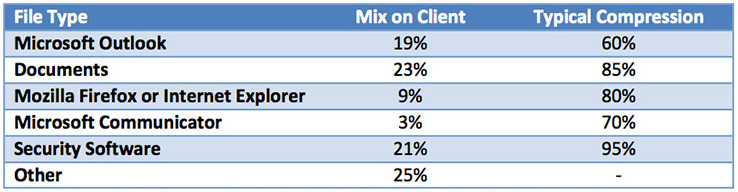

Intel Labs examined entropy in the context of compressibility as background research to support its Intel SSD 520 Series. The following chart summarizes Intel’s findings for the kinds of data commonly found on client storage drives, and the amount of compression that might be achieved:

Source: Intel, Data Compression in the Intel Solid-State Drive 520 Series, 2012


According to Intel, 75% of the file types observed can typically be compressed by 60% or more. Granted, the kinds of files found on drives vary widely according to the type of user. Home systems, for example, might contain more compressed audio and video, which are poor candidates, as mentioned, for data reduction. But after examining hundreds of systems from a wide range of environments, Seagate estimates that the entropy of typical user data averages between 50% and 70%, suggesting that many users would see at least a moderate improvement in performance and endurance from data reduction because most data can be reduced before it is written to the SSD.

Real-world examples in the enterprise

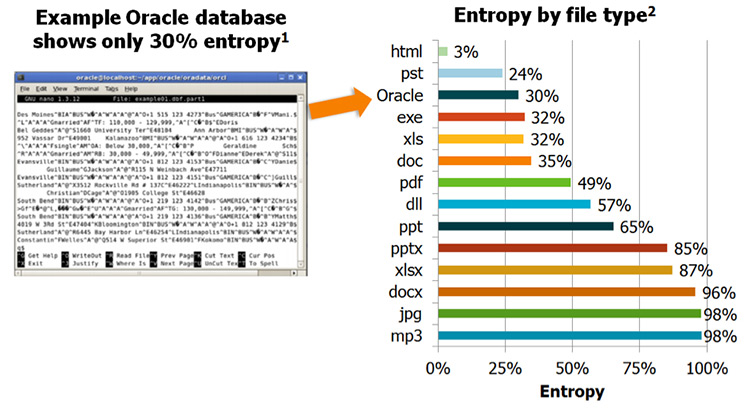

Enterprise IT managers might be surprised at the extent to which data reduction technology can increase workload performance. While gauging the level of improvement with any precision would require data-specific benchmarking, sample data can provide useful insights. Seagate examined the entropy of various data types, shown in the accompanying chart. I found the high reducibility of the Oracle database file very surprising because database engineers had previously told me to expect higher entropy. I later came to understand that these enterprise databases are designed for speed, not capacity optimization. It is therefore faster to store the data in its raw form than to use a software compression application that compresses and decompresses the database on the fly and slows it down.

1. http://www.thesmarterwaytofaster.com/pdf.php?c=LSI_WP_NytroWD-DuraWrite_040412


2. Measured by WinZip

Putting it all together

The chief goals of PC and laptop users and IT managers have long been, and remain, to maximize the performance and lifespan of storage devices, both SSDs and HDDs, at a competitive price point. The challenge for SSD users is to find a device that delivers on all three fronts. Seagate’s SandForce DuraWrite technology helps give SSD users exactly what they want. By reducing the amount of data written to flash memory, DuraWrite increases SSD endurance and performance without additional cost, even if it doesn’t help organize your teenager’s bedroom.
